Hello everyone,

I have been looking for a solution for over a month now and cannot find an answer. I have created a baseline dataset containing fatality data, investment plan data, etc. (all the relevant information for the dataset).

After that, I wanted to check how the fatalities would change if I changed some road attributes (increased speed, barriers). Following the SRIP manual, I copied the baseline dataset and named it as the scenario. All the baseline values remained the same (as the SRIP manual specifies). The only thing I changed is the coding: I uploaded another Excel file with changed coding for speed and road attributes.

As I understand it, when I select Results and the two datasets (baseline and scenario), I should see the difference in fatalities between the baseline and the scenario. However, the fatality data remains the same for both. The Star Ratings change, but the fatality data does not.

I'm not sure what is wrong, or why the fatality data does not change when I change the coding.

I would be very grateful if anyone could help me solve this problem, since I'm pulling my hair out trying to find it.


Did you block auto-calibration? You must disable it before copying the dataset; perhaps you can enable it again after it is copied.

Hello Joan,

Thank you for the reply. Yes, auto-calibration was turned off to get the data from the baseline, and it copied without any errors. In the scenario project the fatality data is the same as in the baseline; however, when I upload the updated/improved/corrected coding file into the scenario project, the fatality estimations (on the Results page) are still the same as the baseline. All other results change, such as investments and Star Ratings, but the fatality estimations remain the same.

Hi Dovydas

I face the same problem as you. How did you solve it? Please share.

Regards

Hi, sorry to see you’re having this problem.

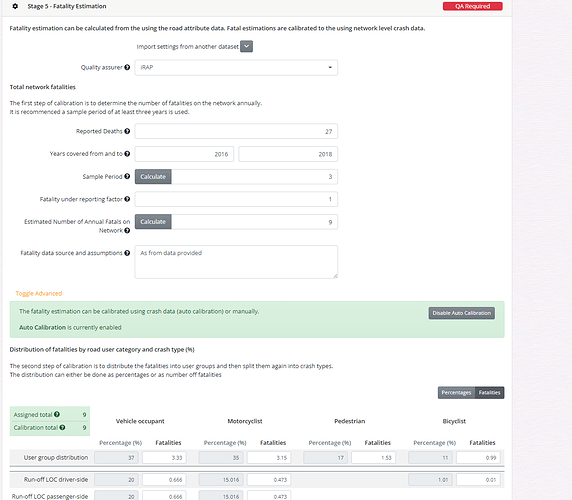

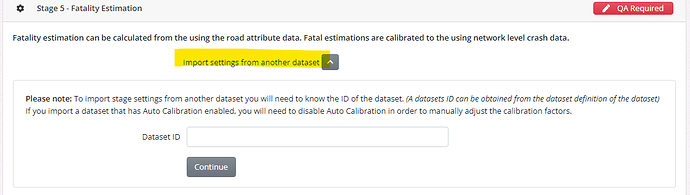

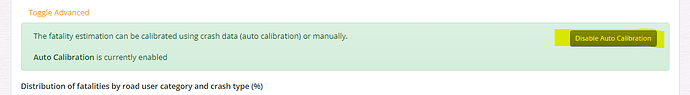

When you’re doing a scenario, it’s important in the second dataset - the one that you will be doing the testing in - to use the “import settings from another dataset” function, and also press the “disable autocalibration” button. This will mean that your second dataset will use the same calibration factors as the original dataset and so, when you change coding, the fatality estimations will change accordingly.
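To see why reusing the baseline calibration matters, here is a deliberately simplified sketch. It assumes (hypothetically) that each section's estimated deaths are its risk score × AADT × length, scaled by a single network-wide calibration factor. The real iRAP model uses separate factors per crash type and road user, so treat this only as an illustration of the principle, not as iRAP's method; the class and function names are made up for the example.

```python
from dataclasses import dataclass

# Hypothetical, simplified model: NOT the actual iRAP/ViDA formula.
# Assumes deaths on a section are proportional to risk x traffic x length,
# scaled by one network-wide calibration factor.


@dataclass
class Section:
    risk_score: float  # hypothetical risk score (lower = safer road)
    aadt: float        # annual average daily traffic
    length_km: float


def raw_risk(sections):
    """Uncalibrated risk exposure for each section."""
    return [s.risk_score * s.aadt * s.length_km for s in sections]


def calibrate(sections, network_fatalities):
    """Auto-calibration: pick the factor that makes the total match the input."""
    return network_fatalities / sum(raw_risk(sections))


def estimate(sections, factor):
    """Estimated fatalities per section for a given calibration factor."""
    return [factor * r for r in raw_risk(sections)]


NETWORK_FATALITIES = 100.0  # "estimated annual fatalities on network" input

baseline = [Section(10, 5000, 2), Section(20, 3000, 1)]
# Scenario: first section improved (e.g. barriers), so its risk score halves.
scenario = [Section(5, 5000, 2), Section(20, 3000, 1)]

baseline_factor = calibrate(baseline, NETWORK_FATALITIES)

# Auto-calibration left ON in the scenario: total is forced back to the input.
auto_total = sum(estimate(scenario, calibrate(scenario, NETWORK_FATALITIES)))

# Auto-calibration OFF, baseline factor imported: total reflects the change.
fixed_total = sum(estimate(scenario, baseline_factor))

print(f"auto-calibrated scenario total: {auto_total:.2f}")
print(f"scenario with baseline factor:  {fixed_total:.2f}")
```

With auto-calibration on, the scenario is pulled back to the same network total (100.00 here) however much the coding improves, which matches the symptom of Star Ratings moving while FSI stays fixed. Reusing the baseline factor lets the total fall (68.75 here).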

Hope this helps. You can see an example of this in practice in the SR4D training course: Training - iRAP

Greg

Dear Greg

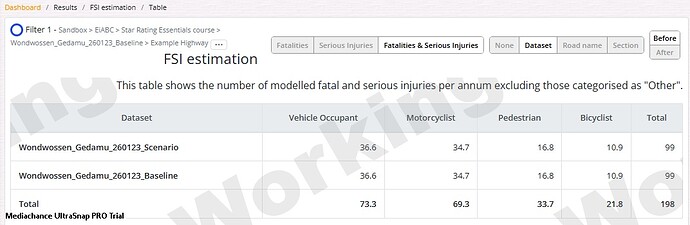

Thank you for your reply. I tried the procedure you mentioned and even experimented with different scenarios that should have a significant impact (e.g. I changed both operating speeds to 50 km/h and reduced the AADT). The Star Rating changed significantly, as expected, but the total FSI for the “Before” condition is still the same in all scenarios. The FSI changed only in the “After” condition, as shown in the screenshot.

I was wondering how the FSI can be different in the baseline and the scenario when the “Estimated Number of Annual Fatal on Network” and the calibrations are the same in both cases. As we know, the iRAP model is an FSI distribution model rather than a prediction model.

Please help me identify what I am missing.

Regards

Hi, wondwossen

Please check whether the GDP per capita, value of life multiplier, value of serious injury multiplier, etc. in the Investment Plan section are filled in correctly. I think it will help. Thanks

Hi there,

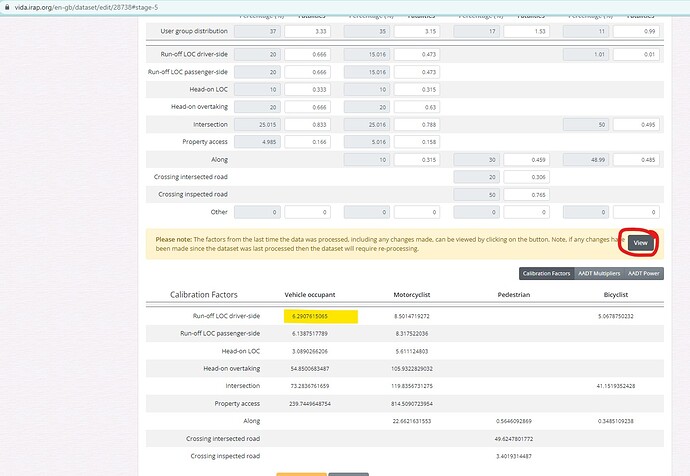

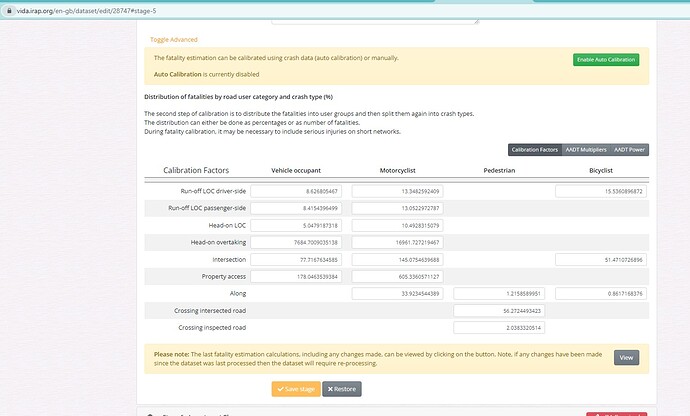

I took a look at your two datasets, and it seems the calibration factors don't match. To check the calibration for the baseline dataset, I clicked the “view” button in the fatality estimation section of the dataset setup. You can see in the first image, for example, that the calibration factor for Run-off LOC driver-side for vehicle occupants is 6.2. In the scenario dataset, the corresponding number is 8.6.
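If you want to repeat this check across every crash type, a small helper can flag mismatches once the factors from both “view” screens are copied into two dictionaries. This is a hypothetical sketch: the function name and dictionary layout are assumptions, no export format or API is implied, and only the 6.2/8.6 pair comes from the datasets discussed here.

```python
# Hypothetical helper: values are typed in by hand from each dataset's
# "view" screen for the fatality estimation calibration; no export format
# or API is assumed.


def mismatched_factors(baseline, scenario, tol=1e-6):
    """Return {crash_type: (baseline, scenario)} where the factors differ."""
    diffs = {}
    for crash_type in sorted(baseline.keys() | scenario.keys()):
        b = baseline.get(crash_type)
        s = scenario.get(crash_type)
        # A missing entry or a difference beyond tol counts as a mismatch.
        if b is None or s is None or abs(b - s) > tol:
            diffs[crash_type] = (b, s)
    return diffs


baseline_cf = {"Run-off LOC driver-side (vehicle occupants)": 6.2}
scenario_cf = {"Run-off LOC driver-side (vehicle occupants)": 8.6}

for crash_type, (b, s) in mismatched_factors(baseline_cf, scenario_cf).items():
    print(f"{crash_type}: baseline {b} vs scenario {s}")
```

An empty result means the scenario really is using the baseline calibration; any entry points to a dataset that needs its settings re-imported.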

Maybe what has happened is that you reprocessed the baseline dataset after linking the two datasets (just a guess!). So what I would try is:

- In the scenario dataset, try using “Import settings from another dataset” again (i.e. import from dataset 28738). Ensure that auto-calibration is disabled.

- Reprocess the scenario dataset

Maybe that will solve the problem, fingers crossed! If it doesn't, I can jump in and look further.

Cheers

Greg